It is very hard to get very far in any sort of “end of year” evaluation of legal ethics questions without talking about the rise of generative AI, how to use it ethically, and what its rapid (and continuing) development will mean for the practice of law.

I wrote earlier this year about an unfortunate (and unnecessary) trend that emerged almost immediately among judges: enacting AI certification requirements for lawyers, as something of a backlash to a high-profile instance of lawyers misusing generative AI and then lying about it.

It seemed, for at least a little bit, that such a trend might have petered out.

Unfortunately, that no longer seems to be the case: the Fifth Circuit has become the first federal appellate court to join the fray, circulating a proposed certification requirement for public comment.

If approved, lawyers filing anything with the Fifth Circuit would have to add a certification along the following lines:

> Additionally, counsel and unrepresented filers must further certify that no generative artificial intelligence program was used in drafting the document presented for filing, or to the extent such a program was used, all generated text, including all citations and legal analysis, has been reviewed for accuracy and approved by a human.

Now, would enacting this be the worst thing the Fifth Circuit has recently done? No, of course not. That distinction probably belongs to its decision that publishing information about wellness, diversity, and inclusivity falls outside the mission of a state bar association, such that doing so violates the First Amendment rights of its members.

But it would still be a bad thing, because such certifications remain both unnecessary and driven by a lack of understanding of just how rapidly the underlying technology is changing. Specifically, this kind of certification requirement is unlikely to be floated by someone who understands that even if you try to misuse ChatGPT to "research" case law for you, you can't, because new guardrails have since been added, to a not-insignificant degree because of the New York case that generated such negative publicity for all involved. Nor is it likely to be floated by someone who understands that players in the legal research industry are introducing new products monthly (if not, it seems, weekly) that harness generative AI alongside legal research databases, offering reliable ways to combine the power of something like ChatGPT with access to real case law. (See, for example, Casetext or Lexis+ AI.)

Now, you may be saying to yourself, "But didn't I just read an article about a Colorado lawyer who also got in trouble because he used ChatGPT and filed a brief containing fake cases with the court?" If you are saying that to yourself, then you are correct, to a degree. But whatever story you read didn't highlight for you that (1) the conduct in question actually occurred back in May 2023 (not too different temporally from the conduct of the New York lawyers in the famed Avianca case); and (2) that lawyer, like the New York lawyers, really got into trouble more for lying after the fact about what he had and had not done.

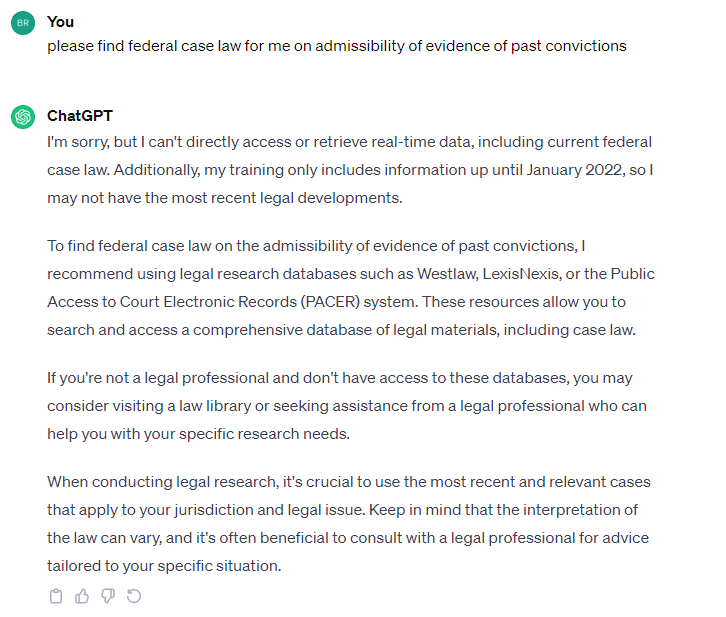

Today, December 12, 2023 (and this has been true for a while now), if you ask ChatGPT to find case law in support of a legal argument, the exchange goes something like this:

https://chat.openai.com/share/445d6eef-9e98-40cc-bd1c-ecaa06627345

If the Fifth Circuit doesn't scrap its plan, you could certainly use ChatGPT to quickly draft the compliance certificate for you. Bonus points if doing so becomes the only reason you have to acknowledge using generative AI at all.